Every C program is made up of tokens. A token is a keyword, an identifier, a constant, a string literal, or a symbol. Lexical analysis converts the input program into a sequence of tokens and identifies the category of each one. The Lex program in this post does this for a small set of categories: keywords, constants, punctuation, special characters, and identifiers.
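To make the idea concrete before turning to Lex, here is a minimal hand-written sketch in plain C of what a lexical analyzer does. It is only an illustration and not the Lex program from this post: the names `TokenKind`, `next_token` and `count_tokens` are made up for the example, the keyword list is limited to `bool`, `int` and `float`, and every character that is not part of a word or number is treated as a one-character symbol.

```c
#include <ctype.h>
#include <string.h>

/* Token categories for this sketch (illustrative names, not from Lex). */
typedef enum { TOK_KEYWORD, TOK_IDENTIFIER, TOK_CONSTANT, TOK_SYMBOL, TOK_END } TokenKind;

/* A deliberately tiny keyword list; real C has many more keywords. */
static int is_keyword(const char *s, size_t len) {
    static const char *keywords[] = { "bool", "int", "float" };
    for (size_t i = 0; i < sizeof keywords / sizeof keywords[0]; i++) {
        if (strlen(keywords[i]) == len && strncmp(keywords[i], s, len) == 0)
            return 1;
    }
    return 0;
}

/* Scan one token starting at *p, advance *p past it, and return its kind. */
static TokenKind next_token(const char **p) {
    const char *s = *p;
    while (isspace((unsigned char)*s)) s++;            /* skip whitespace */
    if (*s == '\0') { *p = s; return TOK_END; }

    const char *start = s;
    TokenKind kind;
    if (isalpha((unsigned char)*s) || *s == '_') {
        /* Consume the longest run of identifier characters first... */
        while (isalnum((unsigned char)*s) || *s == '_') s++;
        /* ...then decide whether the whole word is a keyword. */
        kind = is_keyword(start, (size_t)(s - start)) ? TOK_KEYWORD : TOK_IDENTIFIER;
    } else if (isdigit((unsigned char)*s)) {
        while (isdigit((unsigned char)*s)) s++;        /* unsigned integer constant */
        kind = TOK_CONSTANT;
    } else {
        s++;                                           /* any other single character */
        kind = TOK_SYMBOL;
    }
    *p = s;
    return kind;
}

/* Count how many tokens of the wanted kind appear in src. */
static int count_tokens(const char *src, TokenKind wanted) {
    int n = 0;
    for (TokenKind k = next_token(&src); k != TOK_END; k = next_token(&src))
        if (k == wanted) n++;
    return n;
}
```

For the input `int x = 42;` this sketch sees one keyword (`int`), one identifier (`x`), one constant (`42`) and two symbols (`=` and `;`). The word `integer` is scanned as a single identifier, because the scanner consumes the whole word before checking the keyword list. Lex generates this kind of scanning loop automatically from a list of patterns.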

Code

/* Declare one counter for each token category */

%{

int keywordCount = 0;

int identifiersCount = 0;

int specialCharCount = 0;

int constantsCount = 0;

int punctuationCount = 0;

%}

/* Rules: "end" stops the scanner; the remaining rules classify keywords, constants, punctuation, special characters and identifiers */

%%

end return 0;

bool|int|float printf("Keyword\n"); keywordCount++;

[-+]?[0-9]+ printf("Constants\n"); constantsCount++;

[,.'";]+ printf("Punctuation Chars\n"); punctuationCount++;

[ \t\n]+ ;

[!@#$%^&*(){}=]+ printf("Special Chars\n"); specialCharCount++;

[_a-zA-Z][_a-zA-Z0-9]* printf("Identifiers\n"); identifiersCount++;

%%

/* User code section */

/* Returning 1 tells the scanner there is no more input after end of file */
int yywrap(){ return 1; }

int main(int argc, char **argv)

{

yylex();

printf("Keywords: %d\nConstants: %d\nPunctuation: %d\nSpecial characters: %d\nIdentifiers: %d\n", keywordCount, constantsCount, punctuationCount, specialCharCount, identifiersCount);

return 0;

}

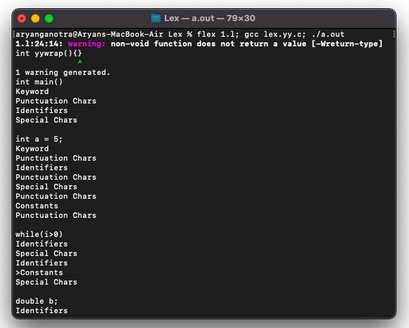

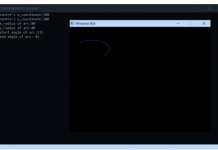

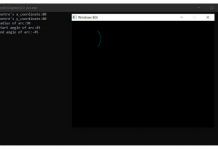

Output


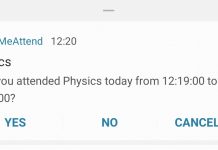

Detect different tokens in a C program.